It has been an eventful week in ASP.NET Core land.

It started with the discovery by community member and ASP.NET Core contributor Kévin Chalet that a PR was being merged into the release branch of ASP.NET Core 2.0 Preview 1, rel/2.0.0-preview1. It was a significant PR in that it was changing the target framework monikers (TFMs) which denote compile targets. In this PR, the previous TFMs of netstandard1.x and net4x were changed to netcoreapp2.0. If this was being merged into a non-release branch, the community probably wouldn't have baulked, but in a release branch, this signals intent to change.
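To make the TFM change concrete, here is a minimal sketch of what it might look like in an SDK-style project file. The exact versions in the commented-out "before" line (netstandard1.3 and net451) are illustrative assumptions; the PR is only described here as moving from netstandard1.x and net4x to netcoreapp2.0.

```xml
<Project Sdk="Microsoft.NET.Sdk">
  <PropertyGroup>
    <!-- Before (illustrative versions, assumed for this sketch):
         <TargetFrameworks>netstandard1.3;net451</TargetFrameworks> -->

    <!-- After: a single target that only .NET Core 2.0 can run -->
    <TargetFramework>netcoreapp2.0</TargetFramework>
  </PropertyGroup>
</Project>
```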

This means one thing in broad strokes:

ASP.NET Core 2.0 was dropping support for .NET Framework (netfx), in favour of specifically targeting .NET Core (netcore) 2.0.

This seemingly came out of nowhere community-wise (I'm pretty sure it would have been discussed extensively internally). ASP.NET Core 1.0 was sold to us with a message of compatibility: you could use the latest technology stack on both the stable and mature .NET Framework and the fast-moving .NET Core. Why would that message now change?

Naturally, the community as a whole was divided, some in favour, some completely objecting. Let's try and take an objective look at the pros and cons of this change.

For moving to .NET Core 2.0 only

The primary motivation for this change, as I understand it, was how quickly ASP.NET Core can move while promising .NET Standard compatibility. .NET Standard was introduced to simplify compatibility between libraries and platforms. Please refer to this blog post as a refresher. The idea is that we could take the .NET Framework, .NET Core, Mono and other implementations of a 'standard', and provide a meta library (the .NET Standard library) with a consistent API surface that you could target across all runtimes. Different versions of each runtime could provide support for a version of .NET Standard.

For instance, if I target netstandard1.4 I know my library should work on .NET Core 1.0 (as it supports up to .NET Standard 1.6), .NET Framework >= 4.6.1 and also UWP 10.0. This was great because .NET Standard ensures API compatibility for me, meaning I worry less about #if specific code to cater for specific build platforms.
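As a rough sketch, a library making that choice would declare a single .NET Standard target in its project file. The file below is a hypothetical minimal example, not taken from any real library.

```xml
<Project Sdk="Microsoft.NET.Sdk">
  <PropertyGroup>
    <!-- One .NET Standard target instead of one target per platform.
         Per the paragraph above, this should be consumable from
         .NET Core 1.0, .NET Framework 4.6.1 and later, and UWP 10.0. -->
    <TargetFramework>netstandard1.4</TargetFramework>
  </PropertyGroup>
</Project>
```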

.NET Standard is a promise.

But one thing that potentially wasn't considered when promising .NET Standard compatibility for ASP.NET Core, and ensuring ASP.NET Core could run on both .NET Framework and .NET Core, was that the two move at different speeds. So how can we take advantage of the new APIs in .NET Core (which would eventually be ported to .NET Framework, and defined in .NET Standard) when .NET Framework has a completely different release cadence? Remember that .NET Framework changes happen slowly, because they need to be tested to ensure support across the billions of devices that currently run the .NET Framework.

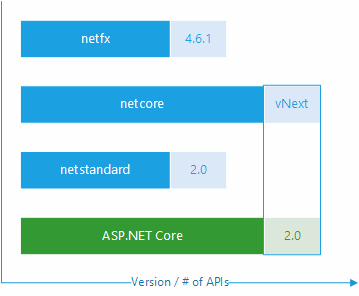

The above chart denotes the API surface of .NET Standard 2.0 and its compatibility with .NET Framework 4.6.1 and .NET Core vNext. It does not express the full API surface available to netfx and netcore.

.NET Core releases will come thick and fast in comparison to .NET Framework, so if we are promising this support to run ASP.NET Core on .NET Framework, we can't take advantage of new APIs implemented in a future version of .NET Standard until .NET Framework has been updated.

Sticking purely to .NET Standard means we are bound by the release cadence of the slower-moving .NET Framework.

Unburdening ourselves from .NET Framework opens the door to getting ASP.NET Core moving a lot quicker.

Only for parts of ASP.NET

Another thing to consider is that it is not the complete ASP.NET Core 2.0 stack being re-targeted. Those libraries which are more likely to be consumed outside of the web application model (e.g. Microsoft.Extensions.*, Razor, etc.) will continue to target .NET Standard to ensure maximum compatibility.

Against moving to .NET Core 2.0 only

From what I see there are two big reasons why we should stick with .NET Standard 2.0:

First, removing support for the .NET Framework means businesses with heavy investment in .NET Framework are cut out of the will. ASP.NET Core 2.0 means .NET Core or nothing, and there is no support for referencing netcore libraries from netfx. Coupled with a short(ish) support lifetime for ASP.NET Core 1.x, this means that businesses implementing ASP.NET Core with their legacy .NET Framework components cannot upgrade to ASP.NET Core 2.0.

This gives businesses only a couple of options: stick with .NET Framework and the ASP.NET stack (read: not Core), in which case your codebase becomes stagnant and very slow moving, or stick with ASP.NET Core 1.x, which has a limited lifetime.

Second, adopting a pattern of latest-framework-only may lead future library authors to switch gears as well, because their primary application model is ASP.NET Core 2.0. Leading by example could be damaging to the ecosystem in this world of promised compatibility (.NET Standard). For example, take a library as prolific as JSON.NET: what happens in some weird future where James determines that he also only wants to support future APIs in .NET Core? (This is only an example, I doubt this would actually happen!)

Where do we go from here?

At Build, Microsoft reaffirmed a commitment to delivering ASP.NET Core 2.0 for both .NET Core and .NET Framework, meaning targeting .NET Standard 2.0. This seems like backtracking, and in light of the amount of noise generated by the GitHub issue and in other channels, Microsoft have decided to please the masses. This is great in that they are listening to customers, but we also have to be realistic about this outcome.

What that now means is:

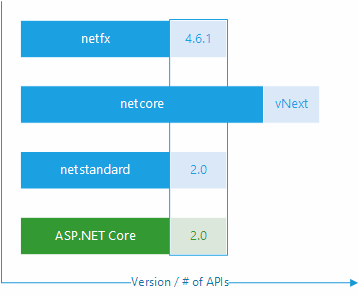

The above chart denotes the API surface of .NET Standard 2.0 and its compatibility with .NET Framework 4.6.1 and .NET Core vNext. It does not express the full API surface available to netfx and netcore.

ASP.NET Core can only move as fast as .NET Standard in terms of using new APIs.

What about me?

I can't come down on one side or the other: both sets of arguments are completely valid. We must simply accept the outcome, which ensures maximum compatibility for all but also limits advancements.

The one thing I would like to see out of all of this is more visibility on these (major) decisions. In the C# language repos, new ideas are thrashed out in discussion before implementation. I feel ASP.NET Core needs to work like this for these critical issues, so perhaps this is an idea the team can take on board.